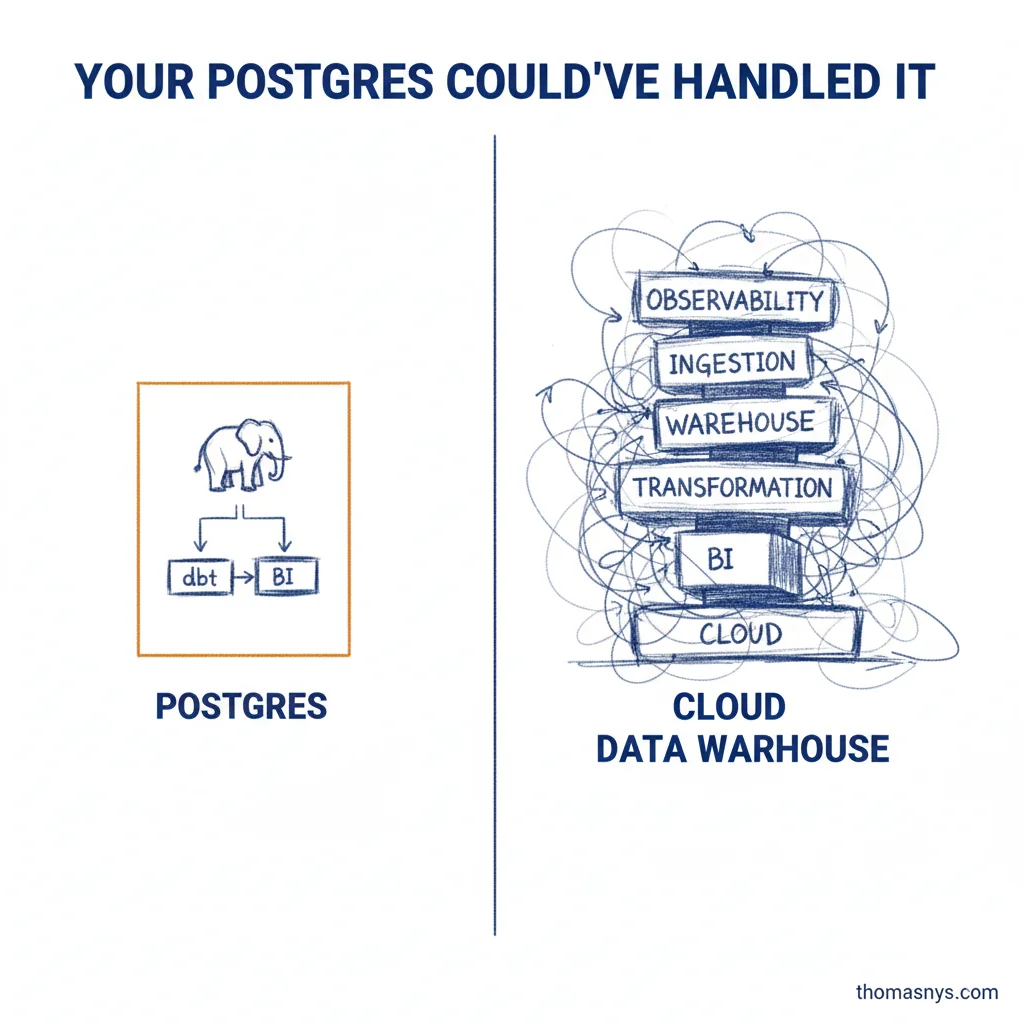

You’re paying EUR3K/month for a cloud warehouse. Your Postgres could’ve handled it.

I see this regularly: a growing company with 15 employees and 200GB of data, where someone has convinced the team they need a cloud warehouse + dbt + Fivetran + Looker. That’s EUR3K/month before anyone writes a query.

The problem isn’t the tools. The tools are fine. The problem is buying them before you have the problems they solve.

Postgres as your analytical warehouse, dbt on top, and a decent BI tool will carry a 15-person company further than most people think. Not glamorous. Gets the job done. I’ve seen a 500GB partitioned Postgres warehouse serve 50 analysts without breaking a sweat.

So when do you actually need a cloud data warehouse? When Postgres-as-warehouse hits its ceiling:

- Your analytical queries on 100M+ rows are taking minutes instead of seconds (and even then, only once tuning has stopped helping)

- You can’t scale compute independently from storage - one heavy query slows everything down for everyone

- You’re managing partitions, vacuuming, and index tuning instead of building data products

Until then, you’re solving problems you don’t have yet.
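
If you want to make that check explicit, the three signals above can be sketched as a small checklist. This is only an illustration, not a benchmark: the `WarehouseSignals` fields, the 60-second "slow" cutoff, and the 8-hours-per-week maintenance threshold are my assumptions, so swap in whatever your team actually measures.

```python
from dataclasses import dataclass


@dataclass
class WarehouseSignals:
    """Hypothetical snapshot of how a Postgres-as-warehouse setup is doing."""
    largest_table_rows: int            # row count of the biggest analytical table
    typical_query_seconds: float       # typical runtime of analytical queries
    heavy_queries_block_others: bool   # does one heavy query slow everyone down?
    maintenance_hours_per_week: float  # time spent on partitions, vacuum, indexes


def ceiling_signals(
    s: WarehouseSignals,
    slow_query_seconds: float = 60.0,       # assumed cutoff for "minutes, not seconds"
    maintenance_threshold: float = 8.0,     # assumed cutoff: about a day per week
) -> list[str]:
    """Return the ceiling signals that are currently firing."""
    hits = []
    if s.largest_table_rows >= 100_000_000 and s.typical_query_seconds >= slow_query_seconds:
        hits.append("slow analytical queries on 100M+ rows")
    if s.heavy_queries_block_others:
        hits.append("compute cannot scale independently of storage")
    if s.maintenance_hours_per_week >= maintenance_threshold:
        hits.append("maintenance is crowding out data products")
    return hits


# A 15-person company with modest tables and fast queries: nothing is breaking.
small_shop = WarehouseSignals(
    largest_table_rows=20_000_000,
    typical_query_seconds=3.0,
    heavy_queries_block_others=False,
    maintenance_hours_per_week=1.0,
)
print(ceiling_signals(small_shop))  # prints []
```

An empty list is the “nothing, but we want to be ready” answer in code form.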

I probably could’ve saved three clients six-figure tool investments last year by asking one question earlier: “What’s actually breaking right now?”

If the answer is “nothing, but we want to be ready” - you’re not ready. You’re over-engineering.

And if you’re worried about being stuck later: migrating from Postgres to a cloud warehouse when you actually need it is a well-trodden path. It’s a solved problem.

Can you justify the ROI of every tool in your current data stack?