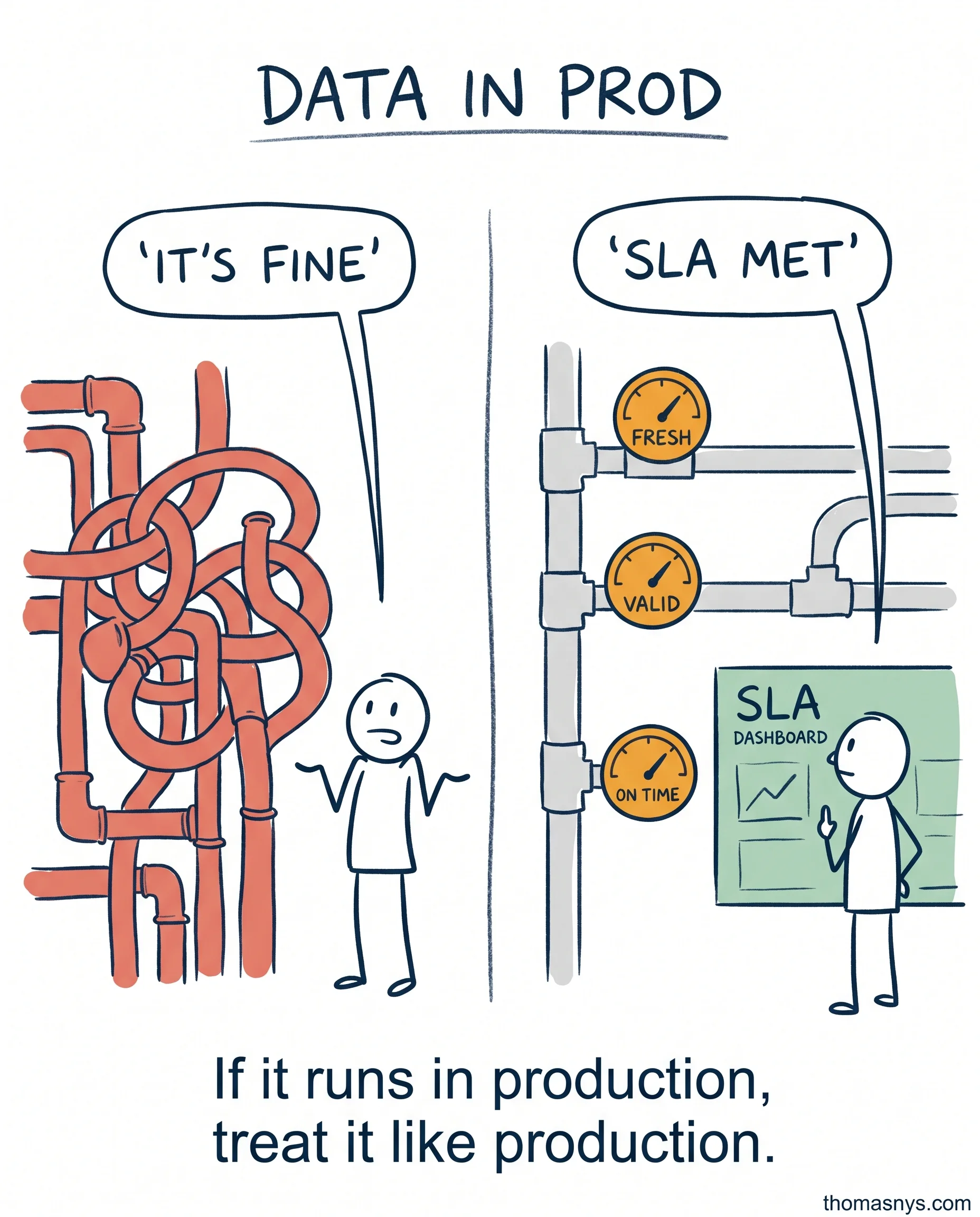

Your data pipelines run in production. But nobody treats them like production systems. That’s about to change.

Software engineering figured this out 15 years ago. You set SLAs. You monitor them. When they breach, someone gets paged. You run post-mortems. You track reliability budgets.

Data platforms? Most teams still find out something’s broken when a stakeholder complains.

The Data SRE role is emerging for exactly this reason. It treats data pipelines like production services - because they are. Freshness SLAs. Completeness thresholds. Latency targets. Row count monitoring. Error rate tracking. All measured, all alerted on.

Once a team commits to this, the conversations change. Stakeholders stop asking why numbers are off, because the team already knows. Engineers stop guessing, because there’s a post-mortem from last week’s breach to point to.

The shift is simple but uncomfortable: your data products need SLAs, incident response playbooks, and post-mortems. Just like your APIs.

Start small. Pick your three most critical data products. Define freshness and completeness SLAs. Monitor them. When they breach, treat it like a production incident - not a Slack thread that dies in 20 minutes.

Do your data products have SLAs? If not, you’re running production systems without a safety net.