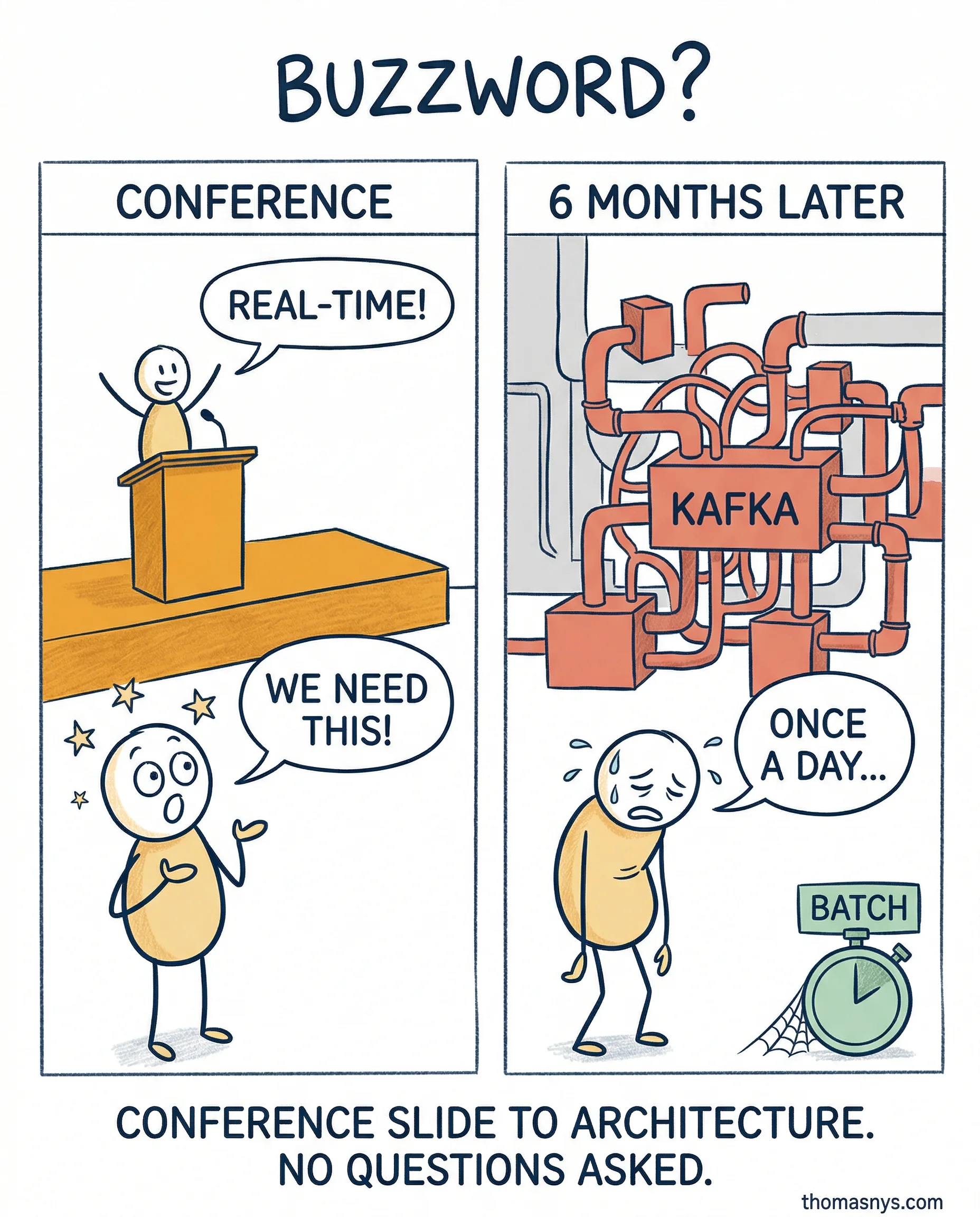

90% of “we need real-time” conversations end with a batch job running at midnight.

I’ve tracked this pattern across clients for two years now. It almost always starts the same way. Someone comes back from a conference. “Real-time” was in every other talk title. Two weeks later it’s in a technical roadmap. Nobody asked what problem it solves.

The team builds streaming infrastructure. Kafka, event processing, the works. Months of engineering. Then someone finally asks the analysts how often they check the dashboards. Once a day. Maybe twice.

This isn’t a technology problem. It’s a buzzword-to-architecture pipeline. A conference talk becomes an exec priority, the exec priority becomes an engineering project, and nobody in that chain validates the actual requirement.

I fell for it too. Built a streaming pipeline for data nobody looked at more than once a day. Four months of work that a 6 AM batch refresh would’ve replaced at 10% of the cost. That’s my scar.

Real-time has real use cases. Fraud detection. Dynamic pricing. Operational alerts. But those are specific, bounded problems where someone or something has to act within seconds or minutes. They are not a default architecture choice because it sounds modern.
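A rough way to make that distinction concrete is to ask how often the data is actually consumed and turn the answer into a tolerated staleness. The sketch below is only an illustration: the function names and the 5-minute cutoff are my own assumptions, not a standard rule, and a real decision would also weigh cost and who has to act on the data.

```python
MINUTES_PER_DAY = 24 * 60


def tolerated_staleness_minutes(checks_per_day: float) -> float:
    """Gap between two consecutive looks at the data, in minutes.

    Hypothetical heuristic: data rarely needs to be fresher than
    the interval at which someone (or something) reads it.
    """
    if checks_per_day <= 0:
        raise ValueError("checks_per_day must be positive")
    return MINUTES_PER_DAY / checks_per_day


def suggest_refresh(checks_per_day: float,
                    streaming_cutoff_minutes: float = 5.0) -> str:
    """Suggest a refresh style from observed consumption frequency.

    The 5-minute default cutoff is an assumed threshold for illustration.
    """
    gap = tolerated_staleness_minutes(checks_per_day)
    if gap <= streaming_cutoff_minutes:
        return "streaming"
    return "scheduled batch"


# Analysts who open the dashboard once or twice a day
print(suggest_refresh(1))
print(suggest_refresh(2))

# An automated fraud check that evaluates events every second
print(suggest_refresh(24 * 60 * 60))
```

Under these assumptions, the once-a-day dashboard lands on a scheduled batch and the per-second fraud check lands on streaming, which matches the split described above.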

The question I now ask before any architecture decision: “Where did this requirement come from? A user need or a conference slide?”

Is “real-time” the new “big data”: something people want but can’t justify?