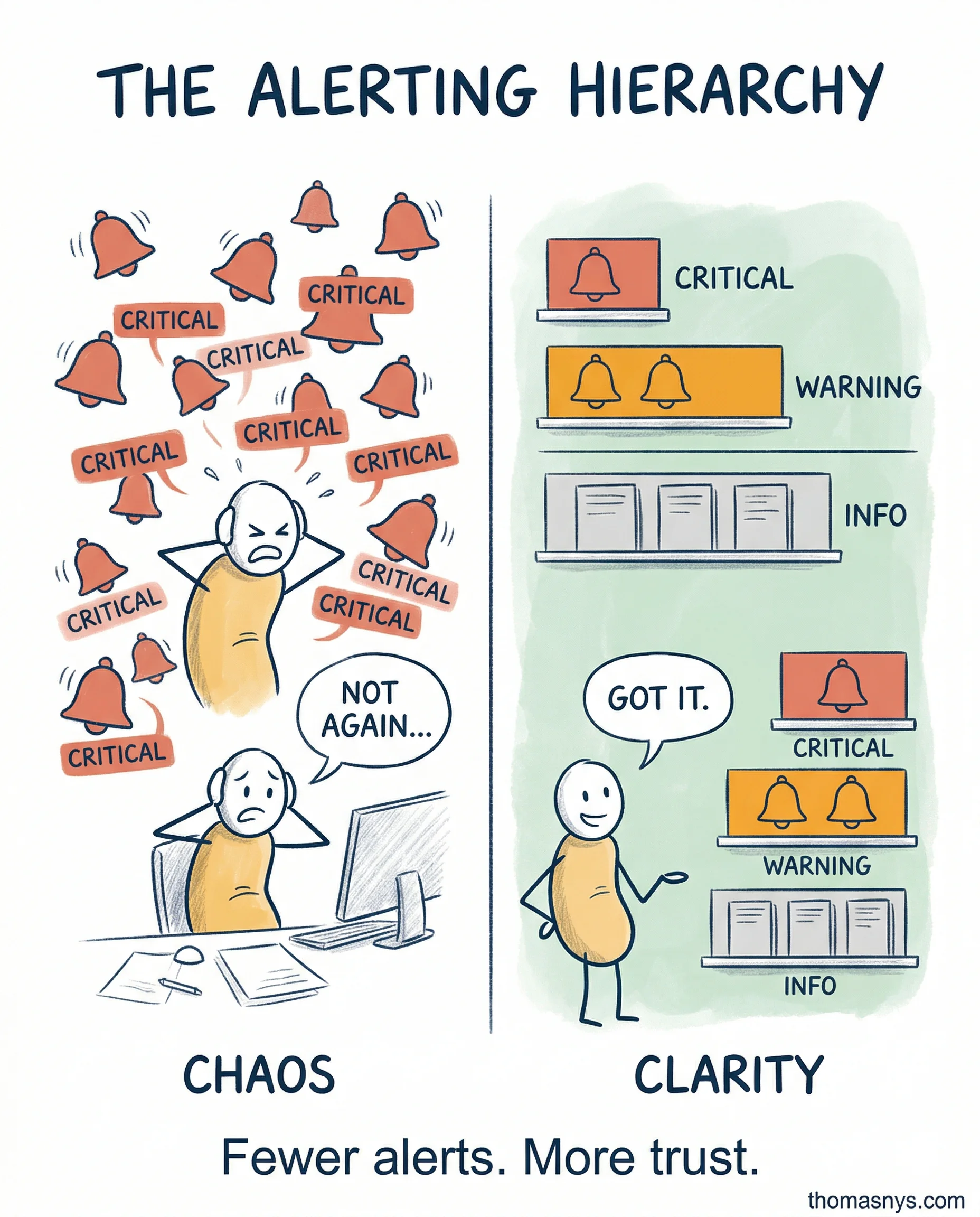

If every alert is critical, none of them are.

I used to think more alerts meant better coverage. I was wrong. What it actually meant was that my team stopped reading them.

Here’s the classification that changed things for us.

Critical: business is losing money right now. Revenue reporting is down. Wake someone up. These should happen fewer than 5 times per month. If you’re above that, you’ve mis-categorized.

Warning: needs attention within hours, not minutes. Pipeline running slower than usual. Nobody loses sleep, but someone picks it up in the morning.

Info: no action needed. Scheduled maintenance completed. A log entry, not an interruption.
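As a rough sketch, the three tiers above could be encoded like this. The names (`Severity`, `ROUTING`, `classify`, `over_critical_budget`) and the routing targets are hypothetical, not taken from any particular alerting tool. The two yes/no inputs are a simplification of the judgment calls described above.

```python
from collections import Counter
from enum import Enum


class Severity(Enum):
    CRITICAL = "critical"  # business is losing money right now
    WARNING = "warning"    # needs attention within hours, not minutes
    INFO = "info"          # no action needed


# Hypothetical routing targets: what each tier is allowed to do to a human.
ROUTING = {
    Severity.CRITICAL: "page_on_call",
    Severity.WARNING: "next_morning_queue",
    Severity.INFO: "log_only",
}

# From the rule of thumb above: fewer than 5 criticals per month.
CRITICAL_BUDGET_PER_MONTH = 5


def classify(losing_money_now: bool, needs_action: bool) -> Severity:
    """Map two yes/no questions onto the three tiers."""
    if losing_money_now:
        return Severity.CRITICAL
    if needs_action:
        return Severity.WARNING
    return Severity.INFO


def over_critical_budget(month_of_severities) -> bool:
    """True when a month's alerts contain 5 or more criticals,
    which suggests some of them are mis-categorized."""
    counts = Counter(month_of_severities)
    return counts[Severity.CRITICAL] >= CRITICAL_BUDGET_PER_MONTH
```

For example, `classify(False, True)` returns `Severity.WARNING`, which routes to the morning queue rather than a page.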

Most alert fatigue traces back to classification, not coverage gaps. When a team gets 30 “critical” pings a week, they start ignoring all of them. That’s when the real incident slips through.

I used to alert on everything. Now I delete alerts nobody acted on in 30 days.

One channel. Clear ownership. Ruthless classification.

What’s your critical-to-noise ratio right now, and does your team still trust the alerts they get?