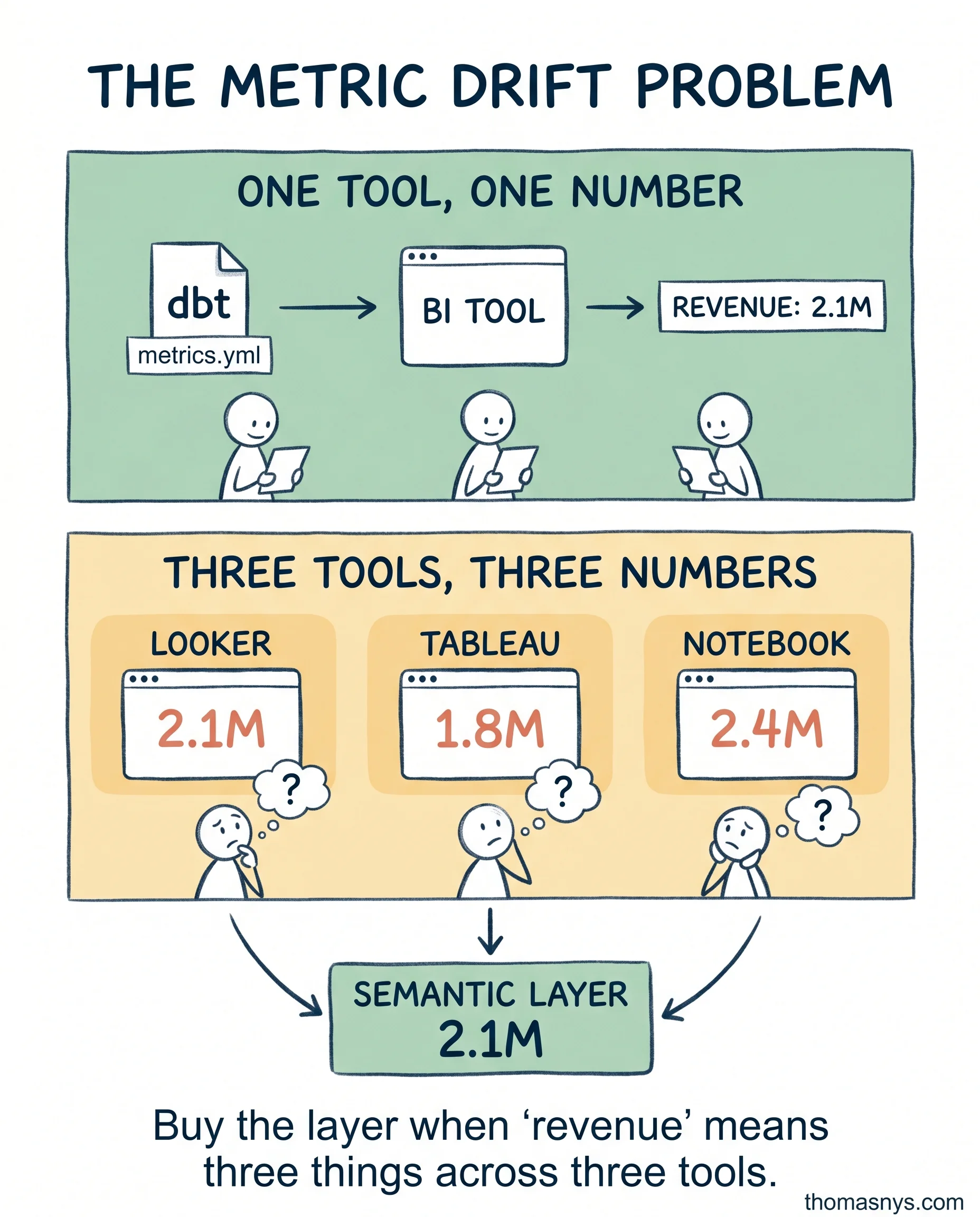

A semantic layer is a consistency tool. Most teams buy it for speed and end up disappointed.

A common pitch: “centralize your metrics, serve them faster, scale your BI.” Speed is doing a lot of work in that sentence. The semantic layer doesn’t make your warehouse faster. It makes your metric definitions identical across every tool that queries them.

That’s a real problem, but a specific one. It shows up when:

- Multiple BI tools consume the same metrics and produce different numbers

- AI agents query metric definitions programmatically and need a single source of truth

- More than one analytics team writes its own SQL for the same KPI

If none of those describe your team, you don’t have the consistency problem the semantic layer solves. A clean dbt project with documented metrics covers it. One place to define a metric. One place to update it. Done.
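To make the drift concrete, here is a toy sketch in Python. The order rows, field names, and both team definitions are invented for illustration. In a real project the shared definition would live in your dbt models, not in Python.

```python
# Hypothetical order rows; field names are illustrative only.
orders = [
    {"amount": 100.0, "refunded": False},
    {"amount": 50.0, "refunded": True},
    {"amount": 25.0, "refunded": False},
]


def revenue_team_a(rows):
    """Team A's private definition: every order counts, refunded or not."""
    return sum(r["amount"] for r in rows)


def revenue_team_b(rows):
    """Team B's private definition: refunded orders are excluded."""
    return sum(r["amount"] for r in rows if not r["refunded"])


def revenue(rows):
    """The single shared definition that every consumer would reuse."""
    return sum(r["amount"] for r in rows if not r["refunded"])


# Same data, same metric name, two different numbers.
drift = revenue_team_a(orders) - revenue_team_b(orders)
print(revenue_team_a(orders), revenue_team_b(orders), drift)
```

Neither team is wrong on its own terms. The problem is that "revenue" means two things, and nothing forces the two definitions to converge.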

Where teams burn money: paying 800-1200/month for a semantic layer serving four users on one BI tool, then spending three months migrating dbt metrics into the layer’s DSL. The migration tax dwarfs the license fee.
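A rough way to check that claim against your own numbers is sketched below. Only the license range and the three-month migration come from the scenario above. The headcount and the loaded cost per person-month are placeholder assumptions, so swap in your own figures, in whatever currency your license is quoted in.

```python
def first_year_costs(license_per_month, migration_months, people,
                     loaded_cost_per_person_month):
    """Return (license_cost, migration_cost) for the first year."""
    license_cost = license_per_month * 12
    migration_cost = migration_months * people * loaded_cost_per_person_month
    return license_cost, migration_cost


# 1200/month and 3 months come from the scenario above.
# 2 people at 10,000 per person-month are ASSUMED placeholder values.
license_cost, migration_cost = first_year_costs(
    license_per_month=1200,
    migration_months=3,
    people=2,
    loaded_cost_per_person_month=10_000,
)

print(f"license: {license_cost}, migration: {migration_cost}")
```

Under those placeholder assumptions the migration costs about four times the annual license, even at the top of the price range.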

The honest test before you sign:

- How many BI tools actively query the same metric definitions?

- How many AI agents or programmatic consumers read your metrics?

- How many analytics teams write their own SQL for shared KPIs?

Most SMEs don’t cross the threshold for a semantic layer until they’re running two BI tools or wiring agents into their metrics. Before that, dbt is enough.
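The three questions can be written down as a small check. This is one reading of the test, not a formal rule: the class name, field names, and exact cutoffs are assumptions, and the third condition comes from the earlier point about more than one analytics team writing its own SQL.

```python
from dataclasses import dataclass


@dataclass
class MetricConsumers:
    bi_tools: int                # BI tools querying the same metric definitions
    programmatic_consumers: int  # AI agents or other code reading your metrics
    analytics_teams: int         # teams writing their own SQL for shared KPIs


def crosses_threshold(c: MetricConsumers) -> bool:
    """True if at least one consistency problem from the test applies."""
    return (
        c.bi_tools >= 2
        or c.programmatic_consumers >= 1
        or c.analytics_teams >= 2
    )


# One BI tool, no agents, one team: dbt is enough.
print(crosses_threshold(MetricConsumers(1, 0, 1)))
# A second BI tool shows up: the consistency problem is now real.
print(crosses_threshold(MetricConsumers(2, 0, 1)))
```

A `True` here only means the consistency problem exists. Whether the layer pays back is a separate question.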

If a semantic layer migration took 3 months of your team’s time, would it pay back in year one?