Two-engineer data teams keep picking Airflow because it’s “industry standard.” Six months in, half the DAGs are commented out.

I see the same pattern at scaleups. Two data engineers, two months of runway pressure, an Airflow deployment that’s already eating one of their weeks. The pitch was solid: dynamic DAGs, huge community, “what every serious team runs.” The reality is the team’s bottleneck isn’t expressiveness. It’s headcount.

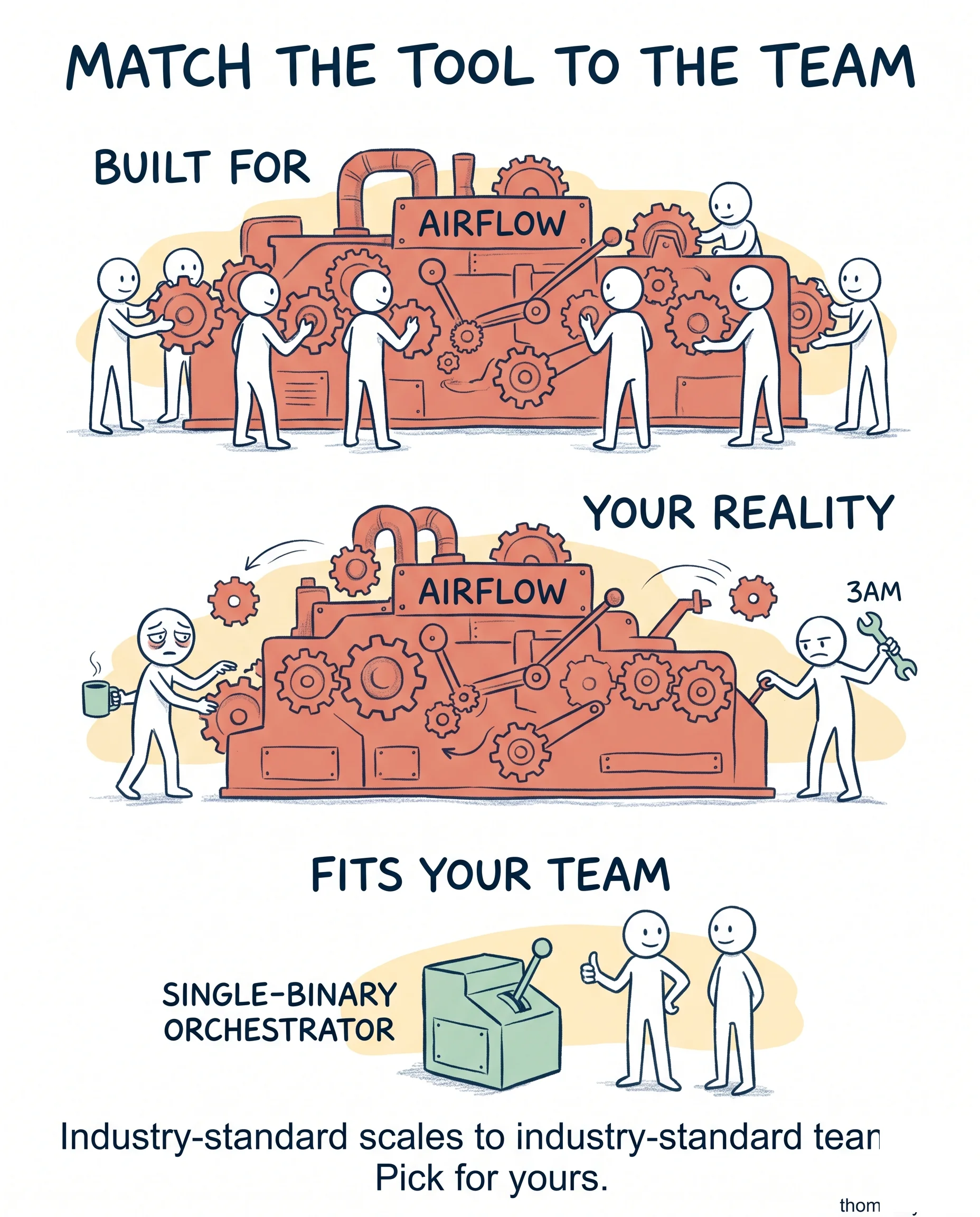

Industry-standard tools are built for industry-standard teams. Airflow scales to thousands of pipelines because Airbnb had a platform team of eight maintaining it. Your two engineers don’t.

What I see go better at this stage:

- Tools with single-binary, single-container, or managed deployments (Kestra, Dagster, Prefect Cloud) over self-hosted Airflow

- Replay-from-checkpoint as a built-in, not a coding exercise

- A UI that shows “what’s currently running” without SSH’ing into a worker

- Local dev that’s one command, not a docker-compose file with five services

- An upgrade path the team can execute in an afternoon

These trade features for operability. When your data team fits in one room, that trade pays back the first time something breaks at 3am.
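To make the “coding exercise” in the replay-from-checkpoint point concrete, here is a minimal sketch of what a small team ends up hand-rolling when the orchestrator doesn’t provide it. Everything in it is hypothetical (the `run_with_checkpoint` name, the JSON checkpoint file, the step tuples); it is not how any of the tools above implement replay.

```python
import json
from pathlib import Path
from typing import Callable

# Hypothetical helper: a step is a (name, zero-argument function) pair.
Step = tuple[str, Callable[[], None]]


def run_with_checkpoint(steps: list[Step], checkpoint: Path) -> list[str]:
    """Run steps in order, skipping any already recorded in the checkpoint file.

    Returns the names of the steps executed during this call.
    """
    # Load the names of steps that finished in a previous run, if any.
    done = set(json.loads(checkpoint.read_text())) if checkpoint.exists() else set()
    executed = []
    for name, fn in steps:
        if name in done:
            continue  # finished in an earlier run, so skip on replay
        fn()  # if this raises, earlier steps stay recorded as done
        done.add(name)
        # Persist progress after every successful step.
        checkpoint.write_text(json.dumps(sorted(done)))
        executed.append(name)
    return executed
```

Even this toy version leaves out atomic checkpoint writes, per-run identifiers, concurrent runs, and cleanup of partial outputs. Those are the pieces that end up costing a two-person team its week, which is the argument for getting replay as a built-in.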

The honest counter: in three years, when you’ve grown to four data teams, you’ll probably move to Airflow anyway. That migration is real work, but it’s cheaper than three years of fighting Airflow with two engineers.

Match the tool to the team you have today. The team you might be in three years can buy something else.

If your data team is under three engineers, why did you pick the tool you picked?