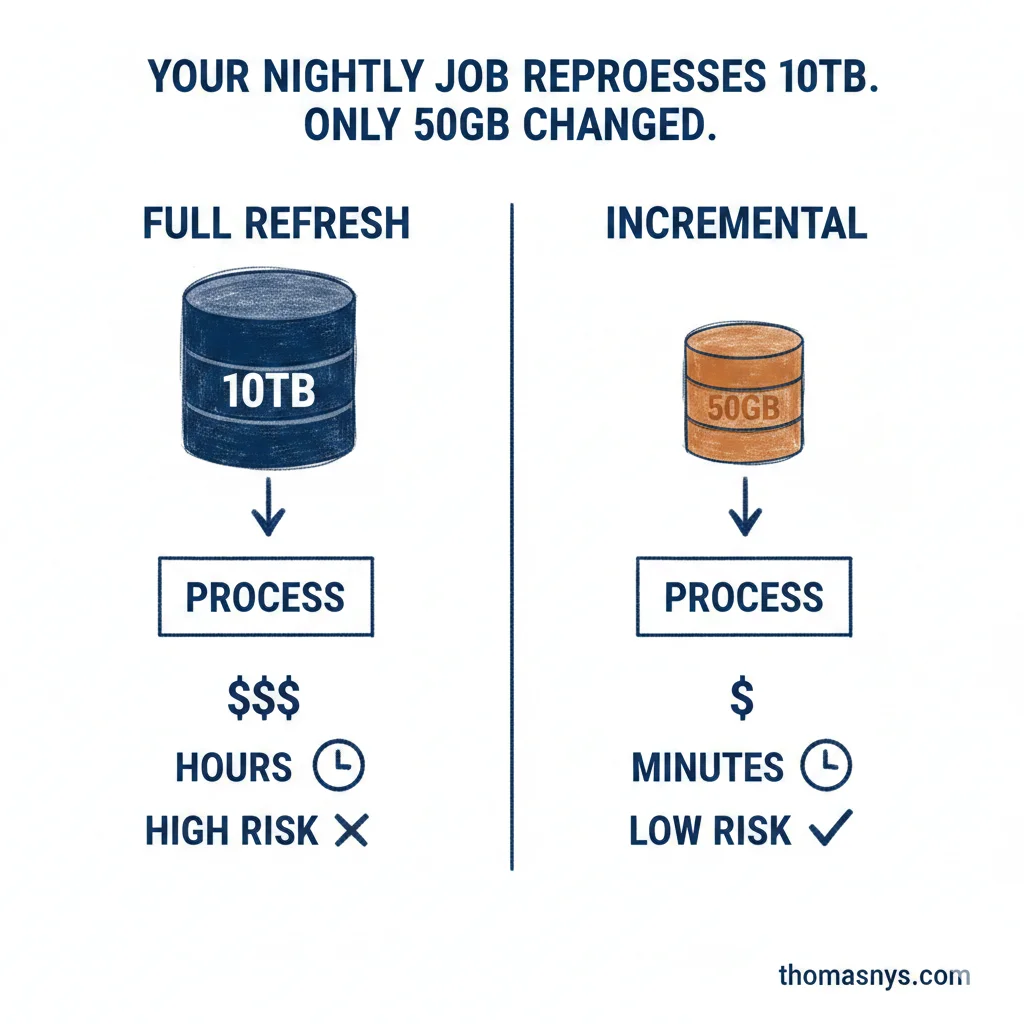

Your nightly job reprocesses 10TB. Only 50GB changed. You’re burning money and adding risk.

This is the incremental processing pattern. Process only what changed.

Two main approaches: Change Data Capture (CDC) captures every insert, update, and delete in near real-time - great when you need tight sync with your source systems. Daily deltas compare snapshots and process the differences - simpler to implement, works fine when overnight freshness is enough. Pick based on how fresh your downstream consumers actually need the data, not what sounds more impressive.

Why it matters:

Compute costs drop. You’re not churning through terabytes that haven’t changed since last week.

Risk drops. Touch less data at a time, fewer things can break. When something does break, recovery is faster because you’re not reprocessing everything since 2019.

Pipeline runtime shrinks. Hours become minutes when you skip unchanged data.

dbt makes this easy with incremental models. Most modern orchestrators support it. The tech is the simple part. Getting teams to let go of full refreshes because “that’s how we’ve always done it” - that’s where you’ll spend your energy.

Start with your largest, slowest pipelines. Ask: what percentage actually changes between runs? If the answer is under 20%, you’ve got a candidate.

Which of your pipelines are full-refresh when they could be incremental?