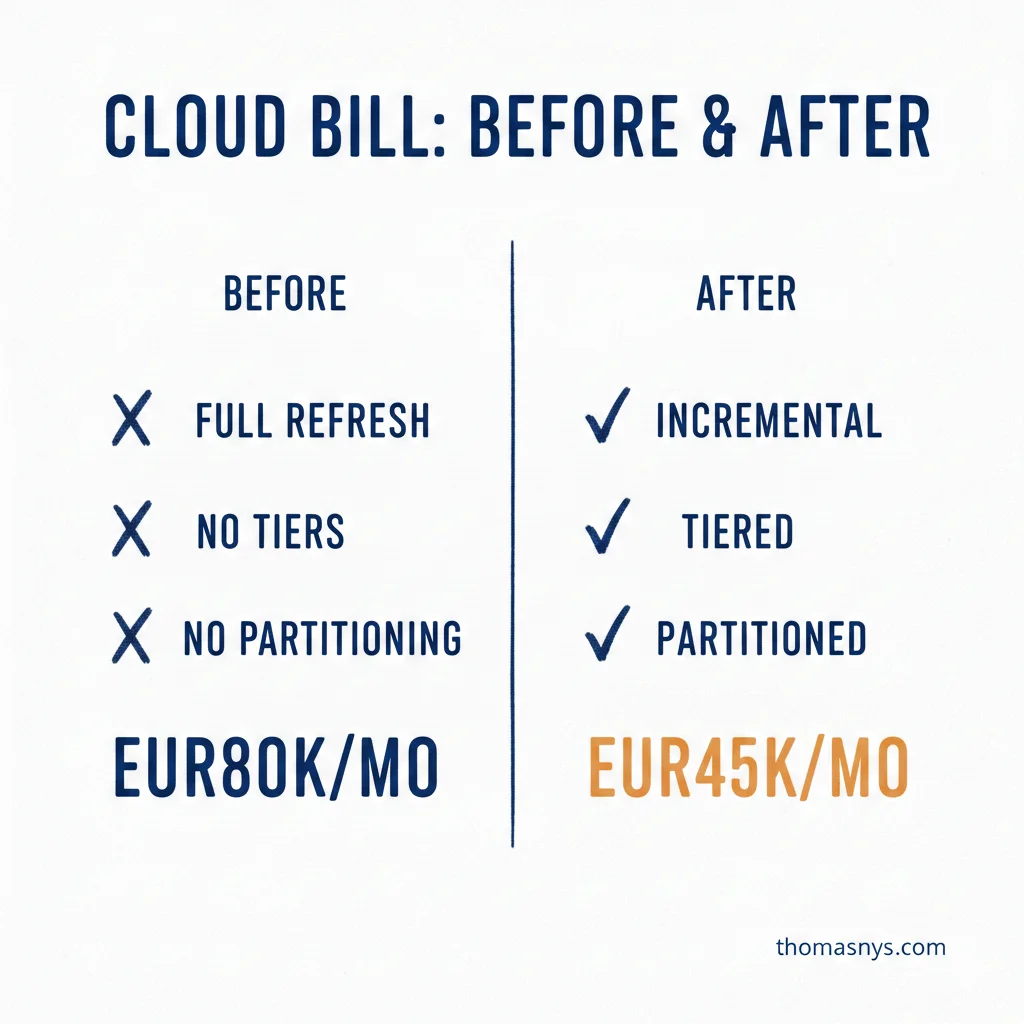

This company cut their monthly cloud data bill from EUR80K to EUR45K in 6 weeks. No functionality lost.

Week one was the audit. And honestly, the findings were almost embarrassing. Full-refresh jobs running on tables that changed 0.1% daily. No partitioning on the largest tables. Hot storage for data nobody had queried in 18 months.
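To make those three findings concrete, here is a minimal sketch of the kind of check an audit can run per table. Everything in it is an assumption for illustration: the `TableStats` fields, the `audit` function, and the thresholds (1% daily change, 500 GB, roughly 18 months as 540 days) are hypothetical, not what we actually ran. In practice the inputs would come from your warehouse's metadata and query logs.

```python
from dataclasses import dataclass


@dataclass
class TableStats:
    # Hypothetical per-table metadata; field names are illustrative only.
    name: str
    daily_change_ratio: float      # fraction of rows changed per day (0.001 = 0.1%)
    full_refresh: bool             # True if the job reloads the whole table
    partitioned: bool
    size_gb: float
    days_since_last_query: int
    storage_tier: str              # "hot", "warm" or "cold"


def audit(table, low_change=0.01, large_gb=500.0, stale_days=540):
    """Return a list of cost findings for one table (thresholds are assumptions)."""
    findings = []
    if table.full_refresh and table.daily_change_ratio <= low_change:
        findings.append("full refresh on slowly changing table")
    if not table.partitioned and table.size_gb >= large_gb:
        findings.append("large table without partitioning")
    if table.storage_tier == "hot" and table.days_since_last_query >= stale_days:
        findings.append("hot storage for stale data")
    return findings


events = TableStats("events", 0.001, True, False, 2000.0, 600, "hot")
for finding in audit(events):
    print(f"{events.name}: {finding}")
```

A table that changes 0.1% a day, is fully refreshed, unpartitioned, and untouched for 600 days trips all three checks.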

This isn’t unusual. I see this pattern at most scaleups that grew fast. The pipelines were built for speed-to-market. Nobody went back to check if they were efficient. Why would you? Things worked.

Weeks 3-4 we converted the biggest offenders to incremental loads. Added partitioning to the tables that mattered. It’s not glamorous work, but each change dropped the bill measurably.
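For anyone who hasn't made this switch: the idea behind an incremental load is to remember a watermark (the latest change you have already processed) and only touch rows newer than that. The sketch below uses plain Python dicts in place of real tables. The `incremental_load` function, the `updated_at` column, and the `id` key are assumptions for illustration, not the client's actual pipeline.

```python
from datetime import datetime


def incremental_load(source_rows, target, watermark):
    """Upsert only rows changed after `watermark` into `target`.

    source_rows: iterable of dicts with hypothetical "id" and "updated_at" keys.
    target: dict keyed by row id, standing in for the destination table.
    Returns the new watermark and the number of rows processed.
    """
    changed = [row for row in source_rows if row["updated_at"] > watermark]
    for row in changed:
        target[row["id"]] = row  # insert new rows, overwrite updated ones
    # If nothing changed, keep the old watermark.
    new_watermark = max((row["updated_at"] for row in changed), default=watermark)
    return new_watermark, len(changed)


source = [
    {"id": 1, "updated_at": datetime(2024, 1, 1), "value": "a"},
    {"id": 2, "updated_at": datetime(2024, 1, 3), "value": "b"},
    {"id": 3, "updated_at": datetime(2024, 1, 5), "value": "c"},
]
target = {1: source[0]}  # row 1 was loaded on a previous run
watermark, processed = incremental_load(source, target, datetime(2024, 1, 2))
print(processed, watermark)
```

A full refresh would rewrite all three rows on every run. Here the job only touches the two that changed after the watermark, and a second run with the new watermark touches none.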

Weeks 5-6 we set up tiered storage. Hot data stays fast. Warm data moves to cheaper storage. Cold data archives. Basic stuff, but nobody had done it.
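The tiering rule itself can be tiny. Here is a rough sketch that assigns a tier based on how long ago a dataset was last queried. The `choose_tier` function and the 30-day and 365-day cutoffs are assumptions for illustration; the right cutoffs depend on your access patterns and your provider's pricing.

```python
def choose_tier(days_since_last_query, warm_after=30, cold_after=365):
    """Pick a storage tier from data age (cutoffs are illustrative assumptions)."""
    if days_since_last_query >= cold_after:
        return "cold"   # archive
    if days_since_last_query >= warm_after:
        return "warm"   # cheaper, slower tier
    return "hot"        # fast tier for actively queried data


# Hypothetical datasets mapped to days since their last query.
last_queried = {"orders_current": 2, "orders_2023": 90, "clickstream_2021": 540}
for dataset, days in last_queried.items():
    print(dataset, "->", choose_tier(days))
```

Most cloud object stores can apply rules like this automatically through lifecycle policies, so once the cutoffs are agreed this rarely needs custom code.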

The result: EUR35K/month saved. That’s EUR420K/year. For six weeks of focused work.

None of this is hard. It just needs somebody who owns it: someone who tracks the spend and fixes what they find.

When was the last time someone audited your cloud data spend? What did they find?