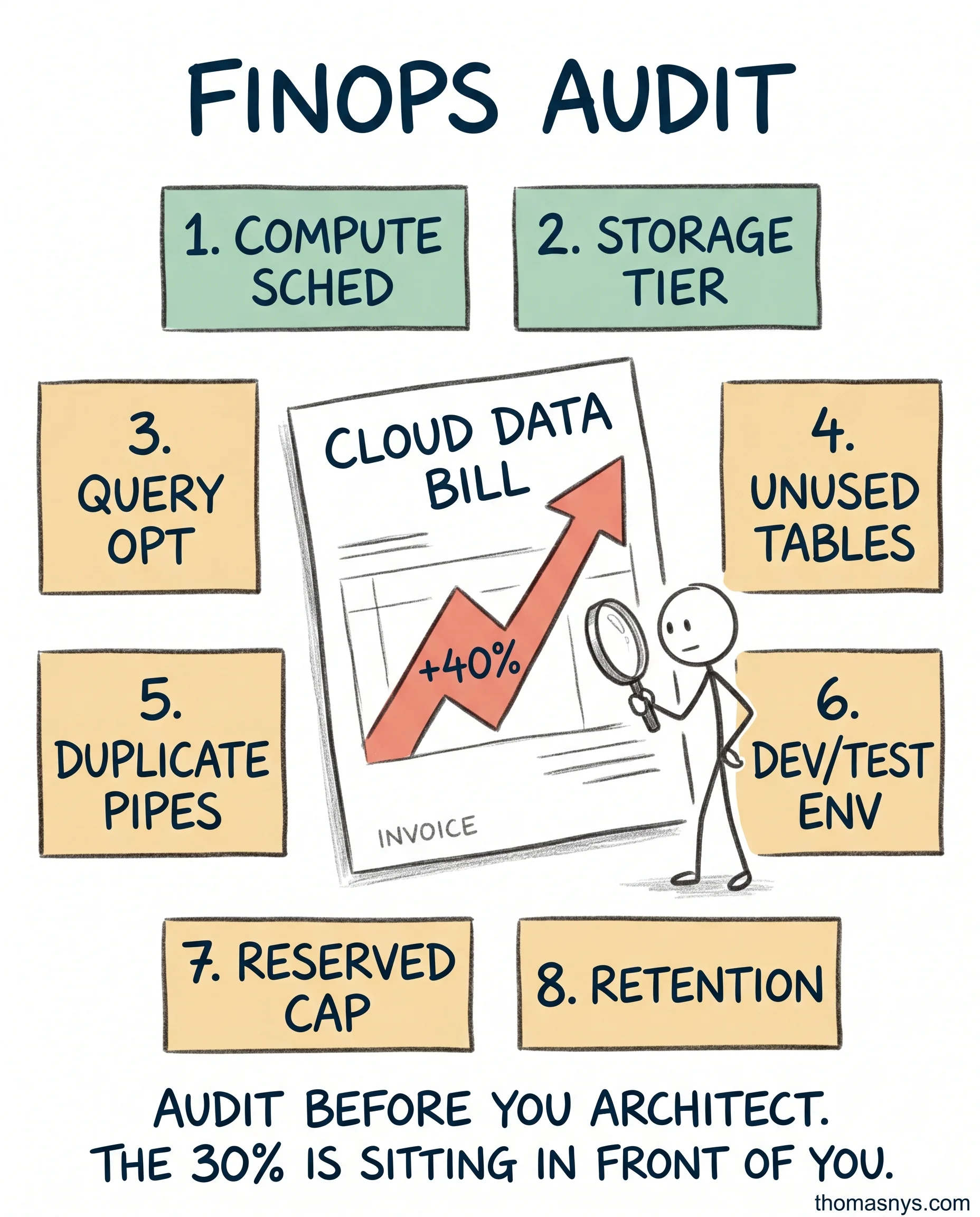

Your cloud data bill grew 40% last year. Your revenue didn’t.

This conversation happens at almost every scaleup I work with. Snowflake, BigQuery, Databricks: the bill compounds quietly until finance flags it. Then everyone scrambles for a “cost optimization initiative.”

Skip the initiative. Run an audit. Eight places to look:

- Compute scheduling. Are warehouses running 24/7 when nobody’s querying overnight? Auto-suspend after 1 minute idle.

- Storage tiering. Cold data on hot storage is wasted spend. Move historical partitions to cheaper tiers.

- Query optimization. The top 10 most expensive queries usually account for 40% of compute. Rewrite them.

- Unused tables. Pull access history or run a lineage scan. Tables with zero reads in 90 days are candidates for deletion.

- Duplicate pipelines. Two teams built the same customer rollup independently. You’re paying twice.

- Dev and test environments. Almost always over-provisioned and never spun down. Quick win.

- Reserved capacity. If your usage is predictable, reserved capacity is typically 30-40% cheaper than on-demand.

- Data retention policies. You’re keeping 7 years of event-level logs because nobody set a policy.
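To check the “top 10 queries” pattern from the query optimization point on your own account, here is a minimal Python sketch. It assumes you have already exported per-run query cost (credits, slot time, or dollars) from your warehouse’s query history into `(query_key, cost)` pairs, where `query_key` is something like a hash of the normalized SQL. That export is platform-specific and not shown, and `top_query_share` is a hypothetical helper, not part of any vendor SDK.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple


def top_query_share(
    runs: Iterable[Tuple[str, float]], n: int = 10
) -> Tuple[List[Tuple[str, float]], float]:
    """Aggregate cost per query key; return the n costliest keys and their share of total cost."""
    # Sum repeated runs of the same query so a cheap query run thousands of times still surfaces.
    totals: Dict[str, float] = defaultdict(float)
    for key, cost in runs:
        totals[key] += cost

    # Rank query keys by total cost, most expensive first.
    ranked = sorted(totals.items(), key=lambda kv: kv[1], reverse=True)
    top = ranked[:n]

    grand_total = sum(totals.values())
    share = sum(cost for _, cost in top) / grand_total if grand_total else 0.0
    return top, share


# Hypothetical example data: one (query_key, cost) pair per run.
runs = [
    ("daily_revenue_rollup", 120.0),
    ("daily_revenue_rollup", 118.0),
    ("customer_360_refresh", 60.0),
    ("adhoc_lookup", 5.0),
]
top, share = top_query_share(runs, n=2)
print(top)             # [('daily_revenue_rollup', 238.0), ('customer_360_refresh', 60.0)]
print(f"{share:.1%}")  # 98.3%
```

Grouping by query key rather than by individual run is the choice that matters: the expensive “query” is often one rollup scheduled many times a day.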
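The 90-day rule from the unused tables point reduces to a date comparison once you have a last-read timestamp per table, for example from your platform’s access history or a lineage tool (how you obtain it varies by platform and is not shown). A minimal sketch, with `stale_tables` as a hypothetical helper; the output is a candidate list for human review, not a deletion script.

```python
from datetime import datetime, timedelta, timezone
from typing import Dict, List, Optional


def stale_tables(
    last_read: Dict[str, Optional[datetime]], now: datetime, days: int = 90
) -> List[str]:
    """Return table names with no read in the last `days` days; None means never read."""
    cutoff = now - timedelta(days=days)
    # A table read exactly at the cutoff still counts as recently used.
    return sorted(
        name for name, ts in last_read.items() if ts is None or ts < cutoff
    )


# Hypothetical inventory: table name -> most recent read (None if never read).
now = datetime(2024, 6, 1, tzinfo=timezone.utc)
last_read = {
    "analytics.orders": now - timedelta(days=3),
    "legacy.events_2019": now - timedelta(days=400),
    "tmp.backfill_copy": None,
}
print(stale_tables(last_read, now))  # ['legacy.events_2019', 'tmp.backfill_copy']
```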
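Whether usage is “predictable” enough for the reserved capacity point comes down to a break-even utilization. Under a simplified model, assumed here and not any vendor’s actual contract terms, a reservation is billed at (1 - discount) of the on-demand rate for every hour whether you use it or not, while on-demand bills only the hours you use. Reserved then wins once average utilization exceeds 1 - discount, so at 30-40% discounts the bar is roughly 60-70% steady utilization. The function names below are hypothetical.

```python
def breakeven_utilization(discount: float) -> float:
    """Utilization above which reserved capacity beats on-demand.

    Simplified model (an assumption): reserved costs (1 - discount) * rate for
    every hour, used or not; on-demand costs rate * hours actually used.
    Reserved is cheaper when utilization * rate > (1 - discount) * rate.
    """
    if not 0.0 <= discount < 1.0:
        raise ValueError("discount must be in [0, 1)")
    return 1.0 - discount


def cheaper_option(utilization: float, discount: float) -> str:
    """Pick the cheaper pricing model for an average utilization between 0 and 1."""
    return "reserved" if utilization > breakeven_utilization(discount) else "on-demand"


print(f"{breakeven_utilization(0.35):.0%}")            # 65%
print(cheaper_option(utilization=0.8, discount=0.35))  # reserved
print(cheaper_option(utilization=0.5, discount=0.35))  # on-demand
```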

Compute scheduling and storage tiering alone cut 30-40% of waste at most clients I’ve seen.

The trap: chasing big architectural changes (migrating to a different warehouse, rebuilding the platform) before doing this audit. The 30% sitting in front of you is cheaper to fix than the 50% behind a 6-month migration.
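One way to see why the nearer 30% can beat the bigger 50% is to compare when each starts paying out. A minimal sketch with hypothetical inputs: a $100k monthly bill, an audit that takes one month and then saves 30%, and a migration that takes six months and then saves 50%, over a 12-month horizon. It assumes the savings rates hold steady once they kick in and ignores the migration’s own engineering cost, which would only widen the gap. `cumulative_savings` is a hypothetical helper.

```python
def cumulative_savings(
    monthly_bill: float, savings_rate: float, start_month: int, horizon_months: int
) -> float:
    """Total saved over the horizon if a savings_rate cut applies from start_month (1-indexed) onward."""
    active_months = max(0, horizon_months - start_month + 1)
    return monthly_bill * savings_rate * active_months


BILL = 100_000  # hypothetical monthly spend in dollars
audit = cumulative_savings(BILL, 0.30, start_month=2, horizon_months=12)      # months 2-12
migration = cumulative_savings(BILL, 0.50, start_month=7, horizon_months=12)  # months 7-12
print(f"audit: ${audit:,.0f}  migration: ${migration:,.0f}")
# audit: $330,000  migration: $300,000
```

Stretch the horizon far enough (try `horizon_months=24`) and the larger rate catches up, which is why this argues for sequencing the work, not for never migrating.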

Audit first. Architect second.

When was the last time someone audited your data infrastructure costs?