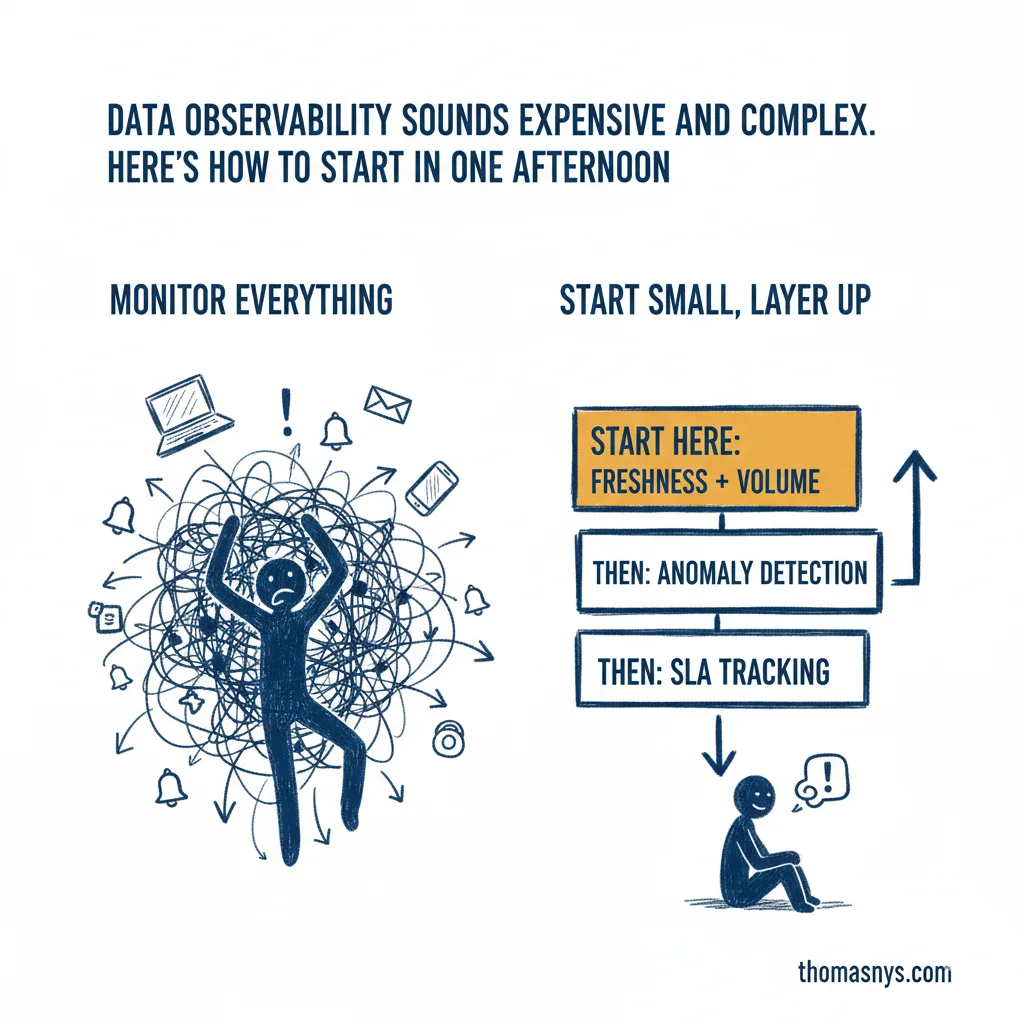

Data observability sounds expensive and complex. Here’s how to start in one afternoon.

Start with business-critical data, not all data.

How to find “business-critical”: ask your stakeholders which reports they’d notice first if the numbers were wrong. Usually it’s revenue, customer counts, or operational metrics that feed executive dashboards. Those tables come first.

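Pick three of those tables. Monitor two things: freshness (did the data arrive on time?) and volume (did roughly the expected number of rows arrive?). Tools like Monte Carlo, Metaplane, or even dbt’s built-in source freshness checks and tests can get you there. That’s your afternoon. You’re already ahead of most teams.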
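If you want to see how small these two checks really are, here’s a minimal sketch in plain Python. It isn’t how any of the tools above work internally. The function names, the 24-hour window, and the 50% tolerance are all assumptions for illustration; the inputs would come from a query like `SELECT MAX(loaded_at), COUNT(*)` against your own table.

```python
from datetime import datetime, timedelta, timezone


def is_fresh(last_loaded_at: datetime, now: datetime, max_age: timedelta) -> bool:
    """Return True if the table was loaded within the allowed window."""
    return now - last_loaded_at <= max_age


def volume_ok(row_count: int, expected_rows: int, tolerance: float = 0.5) -> bool:
    """Return True if row_count is within +/- tolerance of expected_rows."""
    lower = expected_rows * (1 - tolerance)
    upper = expected_rows * (1 + tolerance)
    return lower <= row_count <= upper


# Hypothetical metadata for one table (illustrative values only).
now = datetime(2024, 1, 15, 9, 0, tzinfo=timezone.utc)
last_loaded_at = datetime(2024, 1, 15, 6, 30, tzinfo=timezone.utc)

print("fresh:", is_fresh(last_loaded_at, now, max_age=timedelta(hours=24)))
print("volume ok:", volume_ok(row_count=9_200, expected_rows=10_000))
```

Run that on a schedule against three tables and you have a crude version of the first step.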

From there, layer up. Add anomaly detection in the following weeks: modern tools use ML to catch unknown unknowns, the failures nobody thought to write a rule for. Then set SLAs. “This table refreshes daily by 9am” becomes a trackable promise.
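To make both layers concrete, here’s a rough sketch. Big caveat: the anomaly check below is a plain z-score on daily row counts, a simple statistical stand-in and not the ML the commercial tools use. The SLA check assumes naive local timestamps and that the refresh must land on the same calendar day. Every name and threshold here is hypothetical.

```python
from datetime import date, datetime, time
from statistics import mean, stdev
from typing import Sequence


def is_anomalous(history: Sequence[int], today: int, z_threshold: float = 3.0) -> bool:
    """Flag today's value if it sits more than z_threshold standard
    deviations away from the mean of the historical values."""
    if len(history) < 2:
        return False  # not enough history to judge
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return today != mu  # history is perfectly flat; any change is unusual
    return abs(today - mu) / sigma > z_threshold


def met_daily_sla(refreshed_at: datetime, day: date, deadline: time = time(9, 0)) -> bool:
    """Return True if the table was refreshed on `day` at or before `deadline`."""
    return refreshed_at.date() == day and refreshed_at.time() <= deadline


# Illustrative values only.
row_counts = [1000, 1020, 980, 1010, 990]
print("anomalous:", is_anomalous(row_counts, today=400))
print("SLA met:", met_daily_sla(datetime(2024, 1, 15, 8, 42), date(2024, 1, 15)))
```

The z-score only catches the failures a simple baseline can see, but it shows the shape of the idea: learn what normal looks like, then flag what isn’t.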

The key: funnel alerts to one Slack channel with context. No scattered emails. No mystery pings. One place, clear signal.
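Here’s roughly what “with context” can look like, sketched against a Slack incoming webhook, which accepts a JSON body with a `text` field. The message fields (table, check, detail, owner) and the function names are my own assumptions about what’s useful, not a standard, and the webhook URL is whatever Slack generates for your channel.

```python
import json
import urllib.request


def build_alert(table: str, check: str, detail: str, owner: str) -> dict:
    """Build a Slack message that says what broke, where, and who owns it."""
    text = (
        f"*{check} check failed* on `{table}`\n"
        f"*What happened:* {detail}\n"
        f"*Owner:* {owner}"
    )
    return {"text": text}


def send_alert(webhook_url: str, payload: dict) -> int:
    """POST the payload to a Slack incoming webhook and return the HTTP status."""
    request = urllib.request.Request(
        webhook_url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=10) as response:
        return response.status


# Hypothetical alert; nothing is sent until send_alert() is called
# with a real webhook URL.
payload = build_alert(
    table="analytics.orders",
    check="Freshness",
    detail="Last load was 31 hours ago; expected within 24 hours.",
    owner="data-platform team",
)
print(payload["text"])
```

Every alert answers the same three questions in the same place, so nobody has to open a dashboard just to find out what the ping was about.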

This isn’t some six-month transformation project. Prove value early, expand gradually. I’ve seen teams set up basic monitoring on a Friday afternoon and catch their first incident Monday morning.

The technical part is straightforward. Deciding which data actually matters - that’s where teams get stuck.

What’s your team’s average time to detect a data incident? Under 1 hour is solid. Over 24 hours means stakeholders are finding your bugs for you.
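If you’ve never measured it, the arithmetic is simple once you log two timestamps per incident: when the data went wrong and when someone noticed. A minimal sketch follows, with hypothetical incident data. The one-hour and 24-hour cutoffs are the ones above; the label for the middle band is my own placeholder.

```python
from datetime import datetime, timedelta
from typing import List, Tuple


def average_time_to_detect(incidents: List[Tuple[datetime, datetime]]) -> timedelta:
    """Average the gap between (occurred_at, detected_at) across incidents."""
    if not incidents:
        raise ValueError("need at least one incident")
    delays = [detected_at - occurred_at for occurred_at, detected_at in incidents]
    return sum(delays, timedelta()) / len(delays)


def rate(time_to_detect: timedelta) -> str:
    """Bucket a detection time using the one-hour and 24-hour cutoffs."""
    if time_to_detect < timedelta(hours=1):
        return "solid"
    if time_to_detect > timedelta(hours=24):
        return "stakeholders are finding your bugs"
    return "room to improve"  # middle band: an assumed label, not from the post


# Hypothetical incident log: (occurred_at, detected_at).
incidents = [
    (datetime(2024, 1, 8, 6, 0), datetime(2024, 1, 8, 6, 30)),
    (datetime(2024, 1, 12, 2, 0), datetime(2024, 1, 12, 4, 30)),
]
ttd = average_time_to_detect(incidents)
print(ttd, "->", rate(ttd))
```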