Your data incident post-mortems end with “be more careful.” That’s not a fix.

I keep seeing the same post-mortem doc circulate after a pipeline blows up. It names who shipped the bad PR, recommends “more code review,” and quietly closes the ticket. Three months later, same incident, different engineer.

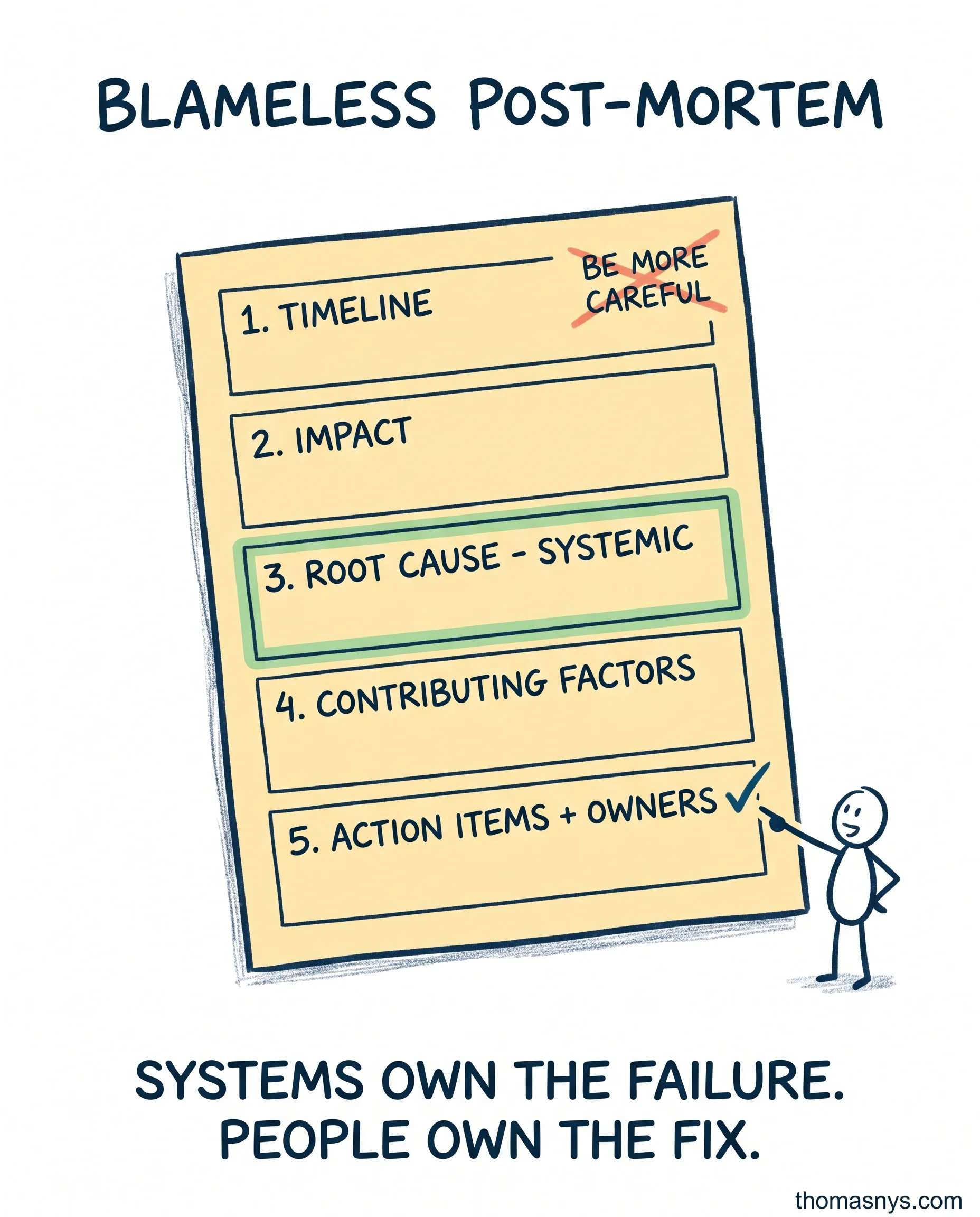

The template I use has five sections, in this order:

- Timeline. Minute by minute, what happened. Pure facts. No interpretation.

- Impact. Who saw bad data, for how long, and what decisions were made on it. EUR or business KPIs where possible.

- Root cause - systemic. Not “Sarah forgot to add a test.” More like “we have no schema validation in CI, and we deploy directly to prod from main.”

- Contributing factors. The other things that lined up. Alerting was off. The runbook was outdated. The on-call person was new.

- Action items with owners and dates. Each one fixes a system, not a person. “Add schema contract tests to CI by May 30, owner: platform team.”

Blameless still demands accountability. The fix is owned by a person. The failure is owned by the system. Mix that up and you either get scapegoating or no follow-through.

The test for whether your post-mortems work: pull last quarter’s reports. How many action items shipped? If the answer is under half, you’re running performance theater.

What was the last data incident your team had? Did the fix stick?